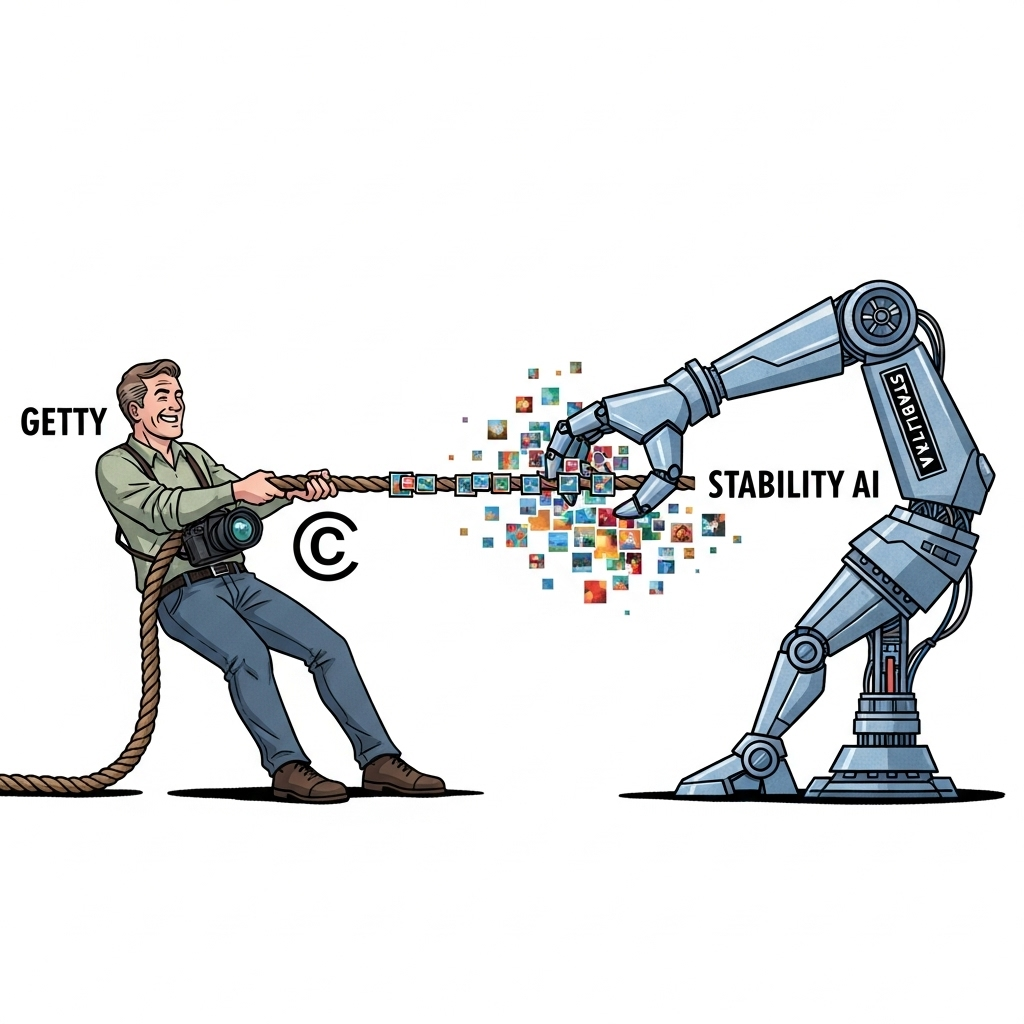

Getty vs. Stability AI: The Copyright Case That Could Reshape Generative AI

A legal fight that will decide who owns the raw material of artificial intelligence

On June 9, 2025, the UK High Court in London began hearing a case that may define the legal boundaries of AI training data for years to come. Getty Images, one of the world’s largest stock photography agencies, is suing Stability AI, creator of Stable Diffusion, for allegedly scraping over 12 million copyrighted images without permission to train its generative image model.

While the courtroom is local, the stakes are global. This case is one of the first major judicial tests of whether training AI models on copyrighted data is lawful or infringing, and the outcome could upend the legal foundation on which much of the generative AI industry has been built.

The Core Legal Questions

At its heart, the case asks two questions:

- Is using copyrighted content to train AI systems permissible under UK law?

- Where does legal responsibility fall when AI development is globally distributed?

Getty claims Stability’s training activities amount to willful, large-scale copyright infringement. In its parallel U.S. lawsuit, it cites statutory damages of up to $150,000 per infringed work, which across 12 million images would put the theoretical maximum at an unprecedented $1.8 trillion. Stability AI’s defense rests on jurisdiction and on how training actually uses data. It argues:

- The training took place in the U.S. and therefore falls outside UK copyright jurisdiction.

- The model does not store or reproduce the copyrighted works. Instead, it extracts abstract statistical patterns, a process Stability characterizes as transformative rather than copying.
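Getty’s damages figure is worth unpacking. The $150,000 number is the top of the U.S. statutory damages scale in 17 U.S.C. § 504(c): ordinarily $750 to $30,000 per work, rising to as much as $150,000 if infringement is found willful. The sketch below computes the theoretical range. It assumes, as a simplification, that every one of the 12 million images counts as a separately registered work. In reality, courts set the award within the band, and registration rules limit eligibility.

```python
# Simplified U.S. statutory damages bands per work (17 U.S.C. § 504(c)).
# Assumption: every image is a separately registered, eligible work.
ORDINARY_MIN = 750        # statutory floor per work
ORDINARY_MAX = 30_000     # ordinary ceiling per work
WILLFUL_MAX = 150_000     # enhanced ceiling if infringement is found willful


def statutory_exposure(works: int) -> dict[str, int]:
    """Return the theoretical total award range for a number of works."""
    if works < 0:
        raise ValueError("works must be non-negative")
    return {
        "ordinary_min": works * ORDINARY_MIN,
        "ordinary_max": works * ORDINARY_MAX,
        "willful_max": works * WILLFUL_MAX,
    }


for label, total in statutory_exposure(12_000_000).items():
    print(f"{label}: ${total:,}")
```

For 12 million works, the function gives $9 billion, $360 billion, and $1.8 trillion. The headline figure is therefore a ceiling, not an expected award.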

Justice Joanna Smith has already raised credibility concerns regarding Stability’s jurisdictional claims, citing inconsistencies in executive testimony around where development took place and who was involved.

The case features over 63 distinct legal issues and more than 78,000 pages of evidence—an indication of just how complex this intersection of technology and copyright law has become.

The De Facto Standard: Web-Scraped Data

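The reality is that much of the modern AI ecosystem has been built on web-scraped data. Large datasets like LAION-5B, Common Crawl, and The Pile have fueled models across text, image, and code domains. Much of their content was gathered through broad-scale crawling of publicly accessible web pages, often without explicit permission from rights holders. LAION-5B, for instance, is an index of about 5.85 billion image URLs paired with alt-text captions, filtered from Common Crawl. Subsets of it were used to train Stable Diffusion.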
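To make "web-scraped" concrete, the sketch below shows the basic mining step behind image-text datasets: scan a crawled HTML page for `<img>` tags and keep each image URL together with its alt text as a caption. The image itself is never downloaded, which mirrors how LAION distributes URLs rather than files. The class and function names are illustrative, and the minimum caption length is a hypothetical filter, not LAION’s documented setting. LAION’s real pipeline also scored each pair for image-text similarity with a CLIP model, a step omitted here.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

MIN_ALT_CHARS = 5  # hypothetical caption-length filter, not LAION's documented value


class ImgAltCollector(HTMLParser):
    """Collect (absolute image URL, alt text) pairs from one HTML page."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.pairs: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src")
        alt = (attributes.get("alt") or "").strip()  # alt may be missing or valueless
        # Keep only images with a usable caption; resolve relative URLs.
        if src and len(alt) >= MIN_ALT_CHARS:
            self.pairs.append((urljoin(self.base_url, src), alt))


def extract_pairs(html: str, base_url: str) -> list[tuple[str, str]]:
    """Return the image-text pairs found in one page of crawled HTML."""
    collector = ImgAltCollector(base_url)
    collector.feed(html)
    collector.close()
    return collector.pairs


page = (
    '<p><img src="/cat.jpg" alt="A tabby cat on a sofa">'
    '<img src="x.png" alt="">'
    '<img src="https://cdn.example.org/dog.jpg" alt="Dog"></p>'
)
print(extract_pairs(page, "https://example.com/page"))
```

Nothing in this step checks who owns the image or whether it may be reused. That gap is the one the litigation is now probing.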

This practice drew little legal scrutiny while AI was still largely a research pursuit. But with products like ChatGPT, Midjourney, and Stable Diffusion now driving multi-billion-dollar revenue streams, the legal exposure from using unlicensed data has escalated dramatically.

The Getty lawsuit is one of more than 150 active copyright cases globally. Others include:

- The New York Times vs. OpenAI and Microsoft

- Major record labels vs. AI music generators Suno and Udio

- Author and artist lawsuits challenging LLM and image model training

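And critically, in February 2025, a U.S. federal court ruling in Thomson Reuters v. Ross Intelligence rejected the fair use defense in an AI context. The ruling suggests courts may not be as sympathetic to broad "pattern learning" arguments as the tech industry assumed. The judge was careful to note, however, that Ross’s legal research tool was not generative AI, which limits how directly the ruling applies to cases like Getty’s.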

How Stable Diffusion Trains—and What It Remembers

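Understanding the mechanics is critical to evaluating liability. Stable Diffusion is a latent diffusion model. During training, it:

- Compresses each training image into a smaller latent representation using a variational autoencoder (VAE).

- Converts the image’s caption into vector representations with a CLIP text encoder.

- Adds random noise to the latents and trains a denoising network, conditioned on the caption, to predict and remove that noise. At generation time, the model starts from pure noise and removes it step by step.

- Learns statistical associations between words and visual forms, not literal pixel copies.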
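The noise-prediction training step can be sketched in plain Python. The forward process mixes a clean latent z_0 with Gaussian noise eps as z_t = sqrt(abar_t) * z_0 + sqrt(1 - abar_t) * eps. The network is then scored on how well it predicts eps. This is a toy built on several assumptions. Latents are short lists of floats rather than VAE outputs. The caption embedding and denoiser are stand-ins: an untrained model that always predicts zero replaces the real U-Net. The "scaled linear" schedule values (0.00085 to 0.012 over 1,000 steps) are those commonly cited for Stable Diffusion v1 and should be treated as assumptions.

```python
import math
import random

STEPS = 1000  # assumed number of diffusion timesteps


def scaled_linear_betas(beta_start=0.00085, beta_end=0.012, steps=STEPS):
    """Per-step noise variances: interpolate sqrt(beta) linearly, then square."""
    lo, hi = math.sqrt(beta_start), math.sqrt(beta_end)
    return [(lo + (hi - lo) * i / (steps - 1)) ** 2 for i in range(steps)]


def cumulative_alphas(betas):
    """alpha_bar[t] = product of (1 - beta) up to step t: the surviving signal."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def add_noise(latent, t, alpha_bar, rng):
    """Forward process: z_t = sqrt(abar_t) * z_0 + sqrt(1 - abar_t) * eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in latent]
    signal, noise = math.sqrt(alpha_bar[t]), math.sqrt(1.0 - alpha_bar[t])
    noisy = [signal * z + noise * e for z, e in zip(latent, eps)]
    return noisy, eps


def training_loss(latent, caption_embedding, predict_noise, alpha_bar, rng):
    """One training step: mean squared error between true and predicted noise."""
    t = rng.randrange(len(alpha_bar))
    noisy, eps = add_noise(latent, t, alpha_bar, rng)
    predicted = predict_noise(noisy, t, caption_embedding)
    return sum((p - e) ** 2 for p, e in zip(predicted, eps)) / len(eps)


rng = random.Random(0)
alpha_bar = cumulative_alphas(scaled_linear_betas())
latent = [rng.gauss(0.0, 1.0) for _ in range(16)]      # stand-in for a VAE latent
untrained = lambda noisy, t, cond: [0.0] * len(noisy)  # stand-in for the U-Net
loss = training_loss(latent, None, untrained, alpha_bar, rng)
print(f"loss for an untrained model: {loss:.3f}")
```

The objective rewards predicting noise, not reproducing the training image. Memorization can still emerge when the same image is seen many times.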

Running diffusion in a compressed latent space rather than on raw pixels makes both training and image generation much cheaper. Proponents argue the learning process is akin to how humans pick up visual relationships.
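For Stable Diffusion v1, the VAE downsamples a 512×512 RGB image by a factor of 8 on each side into 4 latent channels. The arithmetic below shows how much smaller the space is that the diffusion process actually works in. The function name and its defaults are illustrative.

```python
def compression_ratio(height=512, width=512, rgb_channels=3, downsample=8, latent_channels=4):
    """Compare the number of raw pixel values with the number of latent values."""
    pixel_values = height * width * rgb_channels
    latent_values = (height // downsample) * (width // downsample) * latent_channels
    return pixel_values, latent_values, pixel_values / latent_values


pixels, latents, ratio = compression_ratio()
print(pixels, latents, ratio)  # 786432 16384 48.0
```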

But edge cases exist. Research led by Nicholas Carlini has shown that under certain conditions, diffusion models can reproduce specific images—especially those duplicated frequently in training data. So while not the norm, memorization remains a technical vulnerability with legal consequences.
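Carlini and colleagues framed extraction as a generated image landing very close to one specific training image. The sketch below is a deliberately simplified version of that idea. It pairs a nearest-neighbor distance check with an exact-duplicate counter for spotting heavily repeated training images. The function names, the RMS distance, and the 0.05 threshold are assumptions. The paper’s actual distance measure is more elaborate, and exact hashing misses resized or re-encoded copies.

```python
import hashlib
import math
from collections import Counter


def duplicate_counts(images: list[bytes]) -> Counter:
    """Count byte-identical training images by SHA-256 digest."""
    return Counter(hashlib.sha256(img).hexdigest() for img in images)


def rms_distance(a: list[float], b: list[float]) -> float:
    """Root-mean-square pixel difference; pixel values assumed in [0, 1]."""
    if len(a) != len(b):
        raise ValueError("images must have the same number of values")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


def likely_memorized(generated, training_set, threshold=0.05):
    """Flag a generation whose nearest training image is closer than threshold."""
    nearest = min(rms_distance(generated, img) for img in training_set)
    return nearest < threshold, nearest


# Toy data: raw bytes stand in for image files, 4-value lists for decoded pixels.
corpus = [b"sunset-bytes", b"sunset-bytes", b"cat-bytes"]
print(duplicate_counts(corpus).most_common(1))
print(likely_memorized([0.2, 0.4, 0.6, 0.8], [[0.2, 0.4, 0.6, 0.8], [0.9] * 4]))
```

Real audits run this kind of comparison with learned image embeddings and approximate nearest-neighbor search, because a brute-force scan over billions of images is impractical.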

The legal question now hinges on whether the typical use, pattern extraction, is lawful (or, in U.S. terms, fair use), or whether any capacity for memorization renders the entire process infringing.

Implications for the AI Industry

A verdict in Getty’s favor would signal a fundamental shift:

- Training data would need to be licensed, not scraped.

- Liability could scale with model size and training volume.

- Only the largest players—those with existing publisher partnerships—would be able to absorb the costs.

Companies across the industry, from AI developers like OpenAI, Google, and Meta to stock-content providers, have already started hedging against this risk:

- OpenAI has signed licensing deals with publishers including Axel Springer and News Corp. The News Corp agreement alone is reportedly worth more than $250M over five years.

- Google and Meta have signed multi-million-dollar deals with Shutterstock and Getty itself.

- Adobe and Shutterstock offer indemnified, “ethically trained” models.

The emerging consensus: regardless of the final legal ruling, industry leaders are proactively adapting. What began as a legal defense strategy has become a commercial differentiator.

The Regulatory Patchwork

The legal uncertainty isn’t just about one court. AI companies must now navigate a fractured global landscape:

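- UK and U.S.: Rules are being set case by case in the courts, with results that may diverge across jurisdictions. UK law’s text-and-data-mining exception covers only non-commercial research, while U.S. defendants rely on the fair use doctrine.

- EU: The AI Act requires providers of general-purpose AI models to publish a sufficiently detailed summary of their training content and to respect rights holders’ text-and-data-mining opt-outs, a right that comes from the EU’s 2019 Copyright Directive.

- Japan: Article 30-4 of its Copyright Act permits the use of copyrighted material for AI training, including commercial training, unless the use unreasonably prejudices the rights holder’s interests. That makes Japan an attractive destination for model development.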
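In practice, an opt-out only works if a crawler can detect it. robots.txt is one widely used, machine-readable signal, though it is debated whether robots.txt alone satisfies EU law. Below is a minimal sketch using Python’s standard `urllib.robotparser`. The helper `may_use_for_training` is hypothetical. GPTBot is OpenAI’s published crawler name, used here only as an example, and ExampleResearchBot is invented.

```python
from urllib.robotparser import RobotFileParser


def may_use_for_training(robots_lines: list[str], user_agent: str, url: str) -> bool:
    """Return False if the site's robots.txt disallows this crawler for this URL.

    Hypothetical helper: robots.txt is only one possible opt-out signal.
    """
    parser = RobotFileParser()
    parser.parse(robots_lines)  # parse in-memory rules instead of fetching them
    return parser.can_fetch(user_agent, url)


rules = [
    "User-agent: GPTBot",
    "Disallow: /",
    "",
    "User-agent: *",
    "Allow: /",
]
print(may_use_for_training(rules, "GPTBot", "https://example.com/photo.jpg"))              # False
print(may_use_for_training(rules, "ExampleResearchBot", "https://example.com/photo.jpg"))  # True
```

Because robots.txt rules are checked at crawl time, content collected before a site adds a disallow rule is not covered. This is one reason the EU also asks for training-data summaries.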

Some companies may turn to “jurisdiction shopping,” training in Japan or similar environments while deploying globally. But the EU AI Act expects providers of general-purpose models offered in the EU to comply with EU copyright rules regardless of where training took place, so that strategy is increasingly risky.

Long-Term Market Impact

Whether through licensing or regulatory compliance, the industry is heading toward more structured, permission-based data practices. This will likely:

- Increase costs and raise the bar to entry.

- Accelerate consolidation around major tech players.

- Spur innovation in synthetic data generation and copyright-clean datasets.

Synthetic training data and licensed publisher content could serve as alternatives, but smaller or less diverse datasets may reduce model performance, at least initially.

Final Thoughts

The Getty vs. Stability AI trial is a litmus test—not just of legal liability, but of how society balances the rights of creators with the needs of technical innovation.

The AI community is watching closely. Courts may side with creators, affirming that permission must precede progress. Or they may uphold transformative use and pattern learning as fair game. But the broader shift is already underway: licensing, consent, and data provenance are becoming core to model development.

For AI companies, it’s no longer just about building better models. It’s about building responsibly—within legal boundaries that are still being written, one ruling at a time.